Automated Custom Scraper for Tokyo Real Estate

Been thinking about buying a house.

The problem: Tokyo listings are information-dense and full of terms I don’t understand yet… and I’m notoriously ‘frugal’ 🤣

So I built a real estate monitoring agent with Claude Code.

What it does (while I’m making coffee each morning):

- Pulls 600+ real estate listings across 6 Tokyo wards/cities from Japan’s major property portals

- Scores each listing against *my* criteria: ¥/m² vs. local comps, station distance, neighborhood preferences, lot size, and custom build feasibility

- Flags the hidden stuff—restrictions, property rights, and other gotchas that usually waste my time

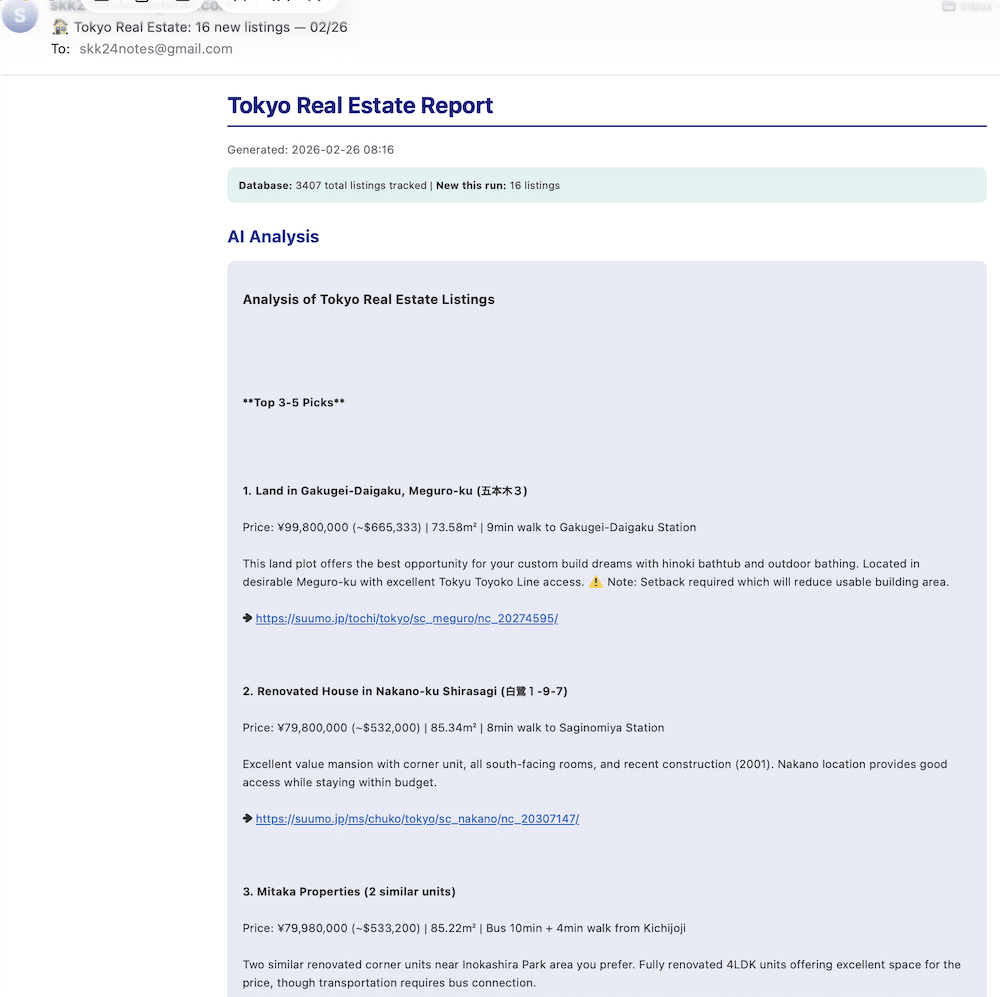

- Generates a short analyst-style briefing on the best opportunities + mortgage estimates vs. my current rent

- Emails me a clean HTML report with recommendations + clickable links to each listing
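
For the mortgage-vs-rent line in the briefing, the standard fixed-rate amortization formula is enough for a rough estimate. Here is a minimal sketch, not the project's actual code: the function name `monthly_payment` and every number in the usage lines (loan amount, rate, term, rent) are illustrative assumptions.

```python
def monthly_payment(principal_yen: float, annual_rate: float, years: int) -> float:
    """Estimate the monthly payment on a fixed-rate, fully amortizing loan.

    Hypothetical helper for illustration; real Japanese mortgages often have
    variable rates, fees, and insurance that this ignores.
    """
    n = years * 12            # total number of monthly payments
    r = annual_rate / 12      # monthly interest rate
    if r == 0:
        return principal_yen / n
    # Standard annuity formula: P * r / (1 - (1 + r)^-n)
    return principal_yen * r / (1 - (1 + r) ** -n)


# Illustrative inputs only (assumptions, not figures from the post)
payment = monthly_payment(50_000_000, annual_rate=0.01, years=35)
current_rent = 180_000
print(f"Estimated payment: ¥{payment:,.0f}/month vs. rent ¥{current_rent:,}/month")
```

Treat the result as a screening number for comparing listings, not a quote from a lender.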

Result: instead of doom-scrolling real estate sites, I see 5-10 genuinely interesting new options.

Yes, SUUMO can email you listings. The difference is the analysis: instead of just “cheap,” each listing gets a value score I derived that answers “is this listing overpriced or underpriced for this neighborhood?” and then weighs constraints and tradeoffs. Surprisingly, I’ve found that most great-looking listings get filtered out by constraints, not price.
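
One simple way to express that kind of value score is to compare a listing's ¥/m² with the median ¥/m² of comparable listings in the same area. The sketch below assumes that approach; the real scoring engine may weigh things differently, and `value_score` and its inputs are hypothetical names.

```python
from statistics import median


def value_score(price_yen: float, area_m2: float, comp_prices_per_m2: list[float]) -> float:
    """Return how far a listing's ¥/m² sits from the local median, as a fraction.

    Negative means cheaper than comparable listings, positive means pricier.
    Hypothetical scoring rule for illustration only.
    """
    listing_ppm2 = price_yen / area_m2
    baseline = median(comp_prices_per_m2)
    return (listing_ppm2 - baseline) / baseline


# Illustrative numbers (assumptions): a ¥40M, 100 m² listing against three comps
score = value_score(40_000_000, 100, [400_000, 500_000, 600_000])
print(f"{score:+.0%} vs. neighborhood median")
```

The median is used here instead of the mean so that one outlier listing does not skew the neighborhood baseline.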

I’m not using AI to *pick* the house. I’m using it to reduce noise so I can use my own judgment.

How it was built:

In a couple of Claude Code sessions (including some iterative debugging), I went from “I need a better way to search Tokyo housing” to a working system: scraper → database → scoring engine → AI analysis → email report.

Stack: Python, BeautifulSoup, SQLite, Anthropic API, cron.
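
To give a feel for the database step, here is a minimal sketch of how SQLite can separate new listings from ones already seen, so the daily report only surfaces fresh options. The table layout, column names, and `store_new` function are assumptions for illustration, not the project's actual schema.

```python
import sqlite3

# Hypothetical schema: the listing URL doubles as the unique key
SCHEMA = """
CREATE TABLE IF NOT EXISTS listings (
    url        TEXT PRIMARY KEY,
    ward       TEXT,
    price_yen  INTEGER,
    area_m2    REAL,
    first_seen TEXT DEFAULT CURRENT_TIMESTAMP
)
"""


def store_new(conn: sqlite3.Connection, listings: list[dict]) -> list[dict]:
    """Insert scraped listings and return only those not already stored."""
    conn.execute(SCHEMA)
    new_items = []
    for item in listings:
        cur = conn.execute(
            "INSERT OR IGNORE INTO listings (url, ward, price_yen, area_m2) "
            "VALUES (?, ?, ?, ?)",
            (item["url"], item["ward"], item["price_yen"], item["area_m2"]),
        )
        # rowcount is 1 when the row was inserted, 0 when the URL already existed
        if cur.rowcount == 1:
            new_items.append(item)
    conn.commit()
    return new_items


# Illustrative usage with an in-memory database and a made-up listing
conn = sqlite3.connect(":memory:")
sample = {"url": "https://example.com/listing/1", "ward": "Setagaya",
          "price_yen": 45_000_000, "area_m2": 90.0}
print(len(store_new(conn, [sample])))  # first run: listing is new
print(len(store_new(conn, [sample])))  # second run: already seen
```

A cron entry that runs the scraper each morning would then feed only the new rows into scoring and the email report.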

I’m not in a rush to buy—but this turned overwhelm into enough clarity to go get a mortgage pre-approval.

(Originally published on LinkedIn.)